As the robotics industry accelerates toward human-like dexterity and autonomy, a fundamental truth remains: machines lack the emotional volatility, cultural context, and irrational spark that fuel human conflict. That absence is precisely why audiences still flock to watch people, not machines, compete in sports, esports, and even digital duels. This isn’t Luddite nostalgia; it’s a neuropsychological boundary hardwired into spectator engagement. While Boston Dynamics’ Atlas can now parkour and Tesla’s Optimus handles warehouse logistics, neither can replicate the trash-talk psychology of a basketball rivalry or the factional fury driving esports viewership. The gap isn’t in actuators or LLMs; it’s in the limbic system.

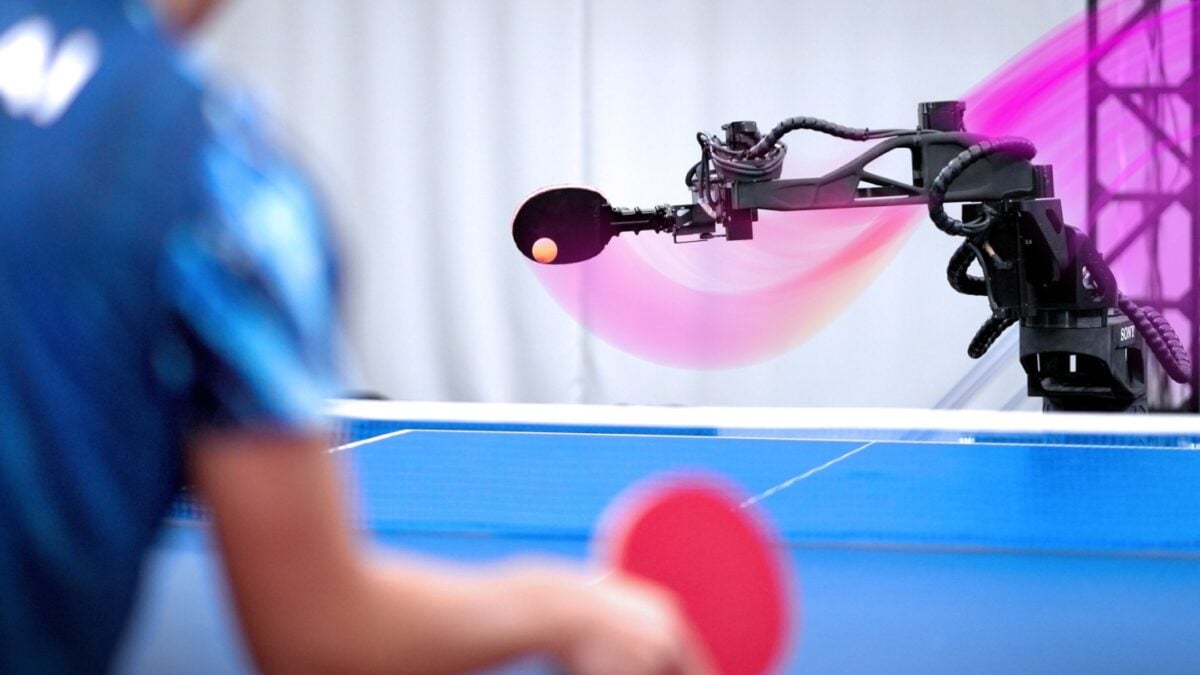

This distinction carries profound implications for AI-driven entertainment, military simulation, and even social robotics. When we strip away the myth of machine supremacy in domains requiring human unpredictability, we redirect innovation toward where robots truly excel: augmenting, not replacing, the human element. Consider the NBA’s recent trial of AI-assisted referee systems—still reliant on human judgment for foul calls involving intent and context. Or the rise of “centaur” esports teams, where human players employ AI agents for real-time strategy suggestions but retain full control over psychological warfare and tilt induction. These aren’t compromises; they’re recognitions of complementary strengths.

The Conflict Gap: Why AI Can’t Manufacture Genuine Rivalry

Human conflict in competitive settings isn’t merely about winning—it’s about status, identity, and emotional release. Neuroscientific studies show that watching rivals triggers mirror neuron activation and cortisol spikes in spectators, creating a shared physiological experience. Current AI agents, even those trained on millions of hours of human gameplay data, optimize for reward functions devoid of ego or spite. A 2025 study from Stanford’s Human-Centered AI Institute found that when AI agents were incentivized to “trash talk” in simulated matches, their outputs were linguistically fluent but semantically hollow—lacking the situational irony, cultural references, and escalatory tension that define human banter.

This isn’t a training data limitation; it’s a motivational architecture problem. Reinforcement learning models optimize for measurable outcomes (points, time, resource efficiency), not the messy, non-quantifiable drivers of human rivalry like pride, revenge, or belonging. As one researcher put it: “You can train an AI to mimic the form of an insult, but not the function—because the function requires a self that feels threatened.”
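To make the point about reward functions concrete, here is a minimal sketch of the kind of scalar reward a reinforcement learning agent might optimize. Everything in it is a hypothetical illustration rather than any real system's design: `MatchState`, its fields, and the weights are assumptions chosen only to mirror the "points, time, resource efficiency" examples above.

```python
from dataclasses import dataclass


@dataclass
class MatchState:
    """Hypothetical snapshot of the measurable quantities in a match."""
    points_scored: int
    seconds_elapsed: float
    resources_spent: float


def reward(state: MatchState,
           w_points: float = 1.0,
           w_time: float = 0.01,
           w_resources: float = 0.1) -> float:
    """Scalar reward built only from quantities the environment can count.

    The weights are illustrative assumptions, not tuned values.
    """
    return (w_points * state.points_scored
            - w_time * state.seconds_elapsed
            - w_resources * state.resources_spent)


# Two matches with identical measurable outcomes receive identical reward,
# whether or not one of them was a grudge match against a long-time rival.
grudge_match = MatchState(points_scored=10, seconds_elapsed=300.0, resources_spent=20.0)
routine_match = MatchState(points_scored=10, seconds_elapsed=300.0, resources_spent=20.0)
assert reward(grudge_match) == reward(routine_match)
```

Nothing in the signature has a slot for pride, revenge, or belonging. Under this framing, the only way such drivers could enter is as another counted term, which is the limitation the paragraph above describes.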

“We’ve seen teams try to deploy LLMs as virtual trash-talkers in gaming lobbies, but the effect falls flat. Players detect the absence of authentic emotional valence—it’s like hearing a comedian recite a joke they don’t understand. The laughter is polite, not visceral.”

Ecosystem Implications: Where Humans Still Own the Edge

This reality reshapes how we design human-AI collaboration systems. In military training simulations, for instance, the U.S. Army’s Synthetic Training Environment (STE) now uses AI-controlled OPFOR (opposing forces) for tactical drills, but deliberately inserts human role-players when simulating irregular warfare or information operations, where deception, cultural missteps, and emotional manipulation are key. The Army’s 2024 After-Action Report noted that units trained against pure AI opponents showed 22% lower adaptability when facing human adversaries in live exercises, precisely because the AI failed to replicate unpredictable human decision-making under stress.

In consumer robotics, companies like Figure AI and 1X are shifting narratives from “robot coworkers” to “robot assistants,” acknowledging that while machines can handle repetitive logistics, human supervisors remain essential for conflict resolution, morale management, and adaptive leadership. This mirrors the industrial cobot trend, where collaborative robots (like those from Universal Robots) are deployed under ISO/TS 15066, the technical specification for collaborative robot safety, while tasks involving judgment or interpersonal dynamics stay with human operators.

The Strategic Opportunity: Augmenting, Not Replacing, Human Drama

Rather than chasing the elusive goal of AI-generated conflict, forward-thinking platforms are leveraging AI to amplify human-driven narratives. Twitch’s new “Rivalry Insights” feature, powered by a fine-tuned Llama 3 model, analyzes chat sentiment and gameplay patterns to highlight emerging tensions between streamers—then surfaces those moments to viewers with contextual overlays. It doesn’t create the drama; it makes human-generated conflict more visible, more accessible, and more engaging.

Similarly, in competitive programming, platforms like Codeforces now use AI to suggest “psychological openings” during live contests—subtle cues based on an opponent’s past behavior under pressure—not to replace the coder’s mind, but to inform their strategy. The human still makes the call; the AI just reduces the cognitive load of reading the room.

This approach respects the boundary: AI as a force multiplier for human expression, not a substitute for it. It also avoids the uncanny valley of artificial animosity, which risks eroding trust in both the technology and the spectacle it attempts to enhance.

“The most compelling AI-assisted competitions aren’t those where the machine tries to be human—they’re the ones where the machine helps humans be more themselves, more strategically, more emotionally.”

What This Means for the Future of Human-Machine Interaction

The takeaway isn’t technological pessimism—it’s clarity. By accepting that robots can’t replicate the messy, irrational, emotionally charged core of human conflict, we free ourselves to build systems that honor what each does best. Machines handle repetition, precision, and scale. Humans bring intention, irony, and the kind of conflict that makes us lean forward, hold our breath, and care about the outcome.

This understanding should guide everything from AI ethics frameworks to entertainment design: stop measuring machines by how well they imitate us, and start measuring them by how well they elevate us. In the arena, on the battlefield, and in the digital commons, the most valuable innovations won’t be those that make robots more human—but those that make humans more effective, more expressive, and more alive in their interactions with the machines they’ve built.