A new study published in Nature Neuroscience reveals that the human brain predicts upcoming words not by guessing the single next term like AI language models, but by analyzing grammatical chunks of language—offering fresh insight into how we process speech and comprehend language in real time.

How the Brain’s Word Prediction Differs from AI Language Models

Researchers from New York University, the Ernst Struengmann Institute for Neuroscience, and Zhejiang University found that, whereas large language models (LLMs) predict words from the statistical likelihood of the immediate next term, the human brain first organizes incoming speech into grammatical constituents (such as noun phrases or verb phrases) before predicting which word best fits that structure. Using magnetoencephalography (MEG) and behavioral cloze tests with Mandarin Chinese speakers, validated in English-speaking cohorts, the team showed that neural responses to words varied significantly with their position within syntactic groups, not just with their entropy or surprisal scores. This challenges the long-held analogy that the brain works like a next-word-prediction AI and suggests instead a hierarchical, syntax-sensitive mechanism for language forecasting.
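Some of the terms in the paragraph above can be made concrete. Cloze probability is the share of participants who complete a sentence frame with a given word. Surprisal is -log2 of that word's probability: the less expected the word, the higher the value. Entropy measures how spread out the predictions are before the word arrives. The Python sketch below computes all three from a small set of completions. The sentence frame, the responses, and the function names are hypothetical, invented for illustration, and do not come from the study's data or code.

```python
import math
from collections import Counter

def cloze_distribution(responses):
    """Turn participants' completions into cloze probabilities (share of responses)."""
    counts = Counter(r.strip().lower() for r in responses)
    total = sum(counts.values())
    return {word: n / total for word, n in counts.items()}

def surprisal(prob):
    """Surprisal in bits, -log2 P(word | context); higher means less expected."""
    if prob <= 0:
        # A word nobody produced has infinite surprisal; real analyses would need smoothing.
        return math.inf
    return -math.log2(prob)

def entropy(dist):
    """Shannon entropy in bits: how uncertain the prediction is before the word arrives."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

# Hypothetical completions of "She drank a cup of ___" from eight participants.
responses = ["tea", "coffee", "tea", "tea", "water", "coffee", "tea", "tea"]
dist = cloze_distribution(responses)

print(dist)                               # {'tea': 0.625, 'coffee': 0.25, 'water': 0.125}
print(round(surprisal(dist["tea"]), 3))   # 0.678 (expected word, low surprisal)
print(surprisal(dist["water"]))           # 3.0 (less expected word, higher surprisal)
print(round(entropy(dist), 3))            # 1.299
```

Every quantity here depends only on the preceding words. None of them carries information about phrase structure, which is the extra dimension the study reports the brain is sensitive to.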

In Plain English: The Clinical Takeaway

- Your brain doesn’t guess words one at a time like autocomplete—it listens for meaningful chunks, like phrases, to predict what comes next.

- This may help explain why we can follow complex sentences even when words are ambiguous or spoken quickly.

- Understanding this mechanism could help improve therapies for language disorders such as aphasia or dyslexia.

Neural Mechanisms of Predictive Language Processing

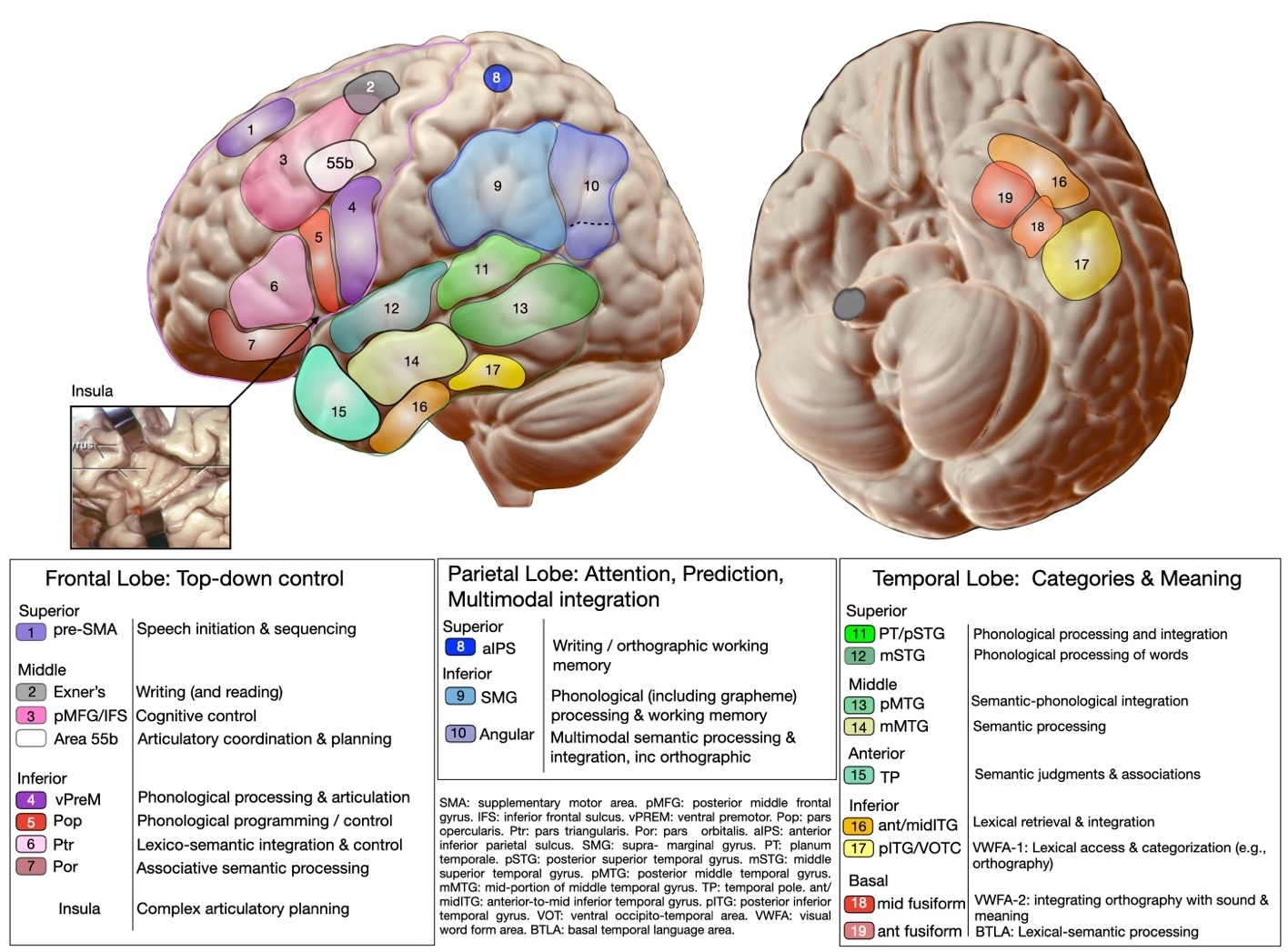

The study's use of MEG allowed researchers to track millisecond-by-millisecond changes in brain activity as participants listened to sentences. Unlike LLMs, which compute predictability uniformly across all word positions, the human brain showed heightened activity in the left inferior frontal gyrus and superior temporal gyrus when processing words at the boundaries of grammatical constituents, suggesting that these regions act as syntactic integrators. This aligns with prior fMRI studies showing that the left hemisphere's language network is sensitive to hierarchical structure, not just sequential probability (Pallier et al., PNAS, 2011). The findings imply that predictive coding in language is not merely statistical but deeply rooted in the brain's capacity for abstract, rule-based computation, a feature notably absent in current transformer-based AI architectures.
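To illustrate what a "constituent boundary" means, the toy Python sketch below takes a hand-written bracketed parse and counts how many phrases close immediately after each word. It assumes that every opening bracket is directly followed by a phrase label (S, NP, VP). The function name `constituent_closures` and the example sentence are hypothetical. This illustrates the concept only and is not the study's MEG analysis pipeline. A next-word model sees only the flat word sequence, whereas this labeling depends on the structure around each word.

```python
def constituent_closures(bracketed):
    """Pair each word with the number of constituents that end right after it.

    Assumes every "[" is immediately followed by a phrase label (S, NP, VP, ...).
    """
    tokens = bracketed.replace("[", " [ ").replace("]", " ] ").split()
    words = []  # list of [word, closures]
    i = 0
    while i < len(tokens):
        tok = tokens[i]
        if tok == "[":
            i += 2  # skip the "[" and the phrase label that follows it
            continue
        if tok == "]":
            if words:
                words[-1][1] += 1  # a phrase ends after the most recent word
        else:
            words.append([tok, 0])
        i += 1
    return [(w, n) for w, n in words]

sentence = "[S [NP the old man] [VP ate [NP an apple]]]"
for word, closes in constituent_closures(sentence):
    print(f"{word:<6} closes {closes} constituent(s)")

boundary_words = [w for w, n in constituent_closures(sentence) if n > 0]
print(boundary_words)  # ['man', 'apple']
```

Here "man" ends the subject noun phrase, and "apple" ends three phrases at once (NP, VP, and S). These are the kinds of positions where the study reports heightened activity.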

Policy and Healthcare Implications

While this research is foundational, its implications extend to clinical neurorehabilitation and public health communication strategies. In the United States, the FDA has cleared several AI-assisted speech therapy tools under its Software as a Medical Device (SaMD) framework, but none currently incorporate syntactic constituency modeling. In the UK, the NHS Long Term Plan emphasizes early intervention for developmental language disorders, affecting over 10% of children nationwide—conditions where predictive processing deficits are increasingly implicated (Bishop, JCPP, 2017). In the European Union, the EMA has encouraged neuroscientific input into digital therapeutics for post-stroke aphasia, a condition affecting ~30% of stroke survivors in Western Europe (Indredavik et al., Stroke, 2008). This research supports refining such tools to mirror the brain’s natural hierarchical prediction strategy rather than mimicking AI’s linear approach.

Funding, Bias Transparency, and Expert Perspective

The study was funded by the National Institutes of Health (NIH Grant R01-DC004263), the National Science Foundation (NSF BCS-1724265), and the Alexander von Humboldt Foundation. No pharmaceutical or AI industry sponsors were involved, minimizing commercial bias. In a recent interview with the NIH Director’s Blog, Dr. David Poeppel emphasized that “the brain’s language prediction is not a statistical guessing game—it’s structured, rule-sensitive, and fundamentally linguistic.” Supporting this, Dr. Nai Ding of Zhejiang University noted in a 2024 press release that “cross-linguistic validation in English and Mandarin confirms this is a universal feature of human cognition, not an artifact of language-specific processing.”

“AI models impress us with fluency, but they lack the syntactic awareness that gives human language its flexibility and resilience. We’re not just predicting words—we’re building structures.”

— Dr. David Poeppel, Professor of Psychology and Neural Science, New York University

Comparative Processing: Brain vs. AI in Language Prediction

| Feature | Human Brain | Large Language Models (LLMs) |
|---|---|---|
| Prediction Unit | Grammatical constituents (phrases, clauses) | Single next word |
| Neural Basis | Left inferior frontal gyrus, superior temporal gyrus | Transformer attention layers |
| Sensitivity to Syntax | High: responds to phrase boundaries | Low: treats all words equally |
| Cross-Linguistic Consistency | Observed in Mandarin and English | Depends on training data distribution |
| Adaptation to Ambiguity | Uses context to resolve uncertainty via structure | Relies on statistical frequency alone |

Contraindications & When to Consult a Doctor

This research describes a fundamental aspect of healthy cognition and does not involve any intervention, treatment, or exposure that carries risk. There are no contraindications associated with this finding. However, individuals experiencing persistent difficulty understanding spoken language, following conversations, or finding words—especially if sudden or worsening—should consult a neurologist or speech-language pathologist. These symptoms may indicate underlying conditions such as aphasia, primary progressive aphasia, or cognitive impairment requiring formal evaluation. Early referral improves outcomes in post-stroke and neurodegenerative language disorders (ASHA, 2023).

Broader Significance and Future Directions

This work advances our understanding of human cognition by demonstrating that the brain’s predictive machinery is not a primitive statistical learner but a sophisticated syntactic engine. It cautions against overestimating the similarity between AI and human intelligence, particularly in language. Future research should explore whether individual differences in syntactic prediction correlate with literacy outcomes or susceptibility to neurodevelopmental disorders. Longitudinal studies using MEG in infants could reveal how this mechanism develops and whether early biomarkers exist for language-based learning disabilities. For now, the takeaway is clear: our brains don’t autocomplete—they understand.

References

- Poeppel, D., et al. (2026). Human language prediction relies on grammatical constituents, not just next-word statistics. Nature Neuroscience. https://doi.org/10.1038/s41593-026-02272-6

- Pallier, C., et al. (2011). Cortical representations of the constituent structure of sentences. Proceedings of the National Academy of Sciences, 108(6), 2522–2527. https://doi.org/10.1073/pnas.1018711108

- Bishop, D.V.M. (2017). Why is it so hard to improve our understanding of developmental language disorder? Journal of Child Psychology and Psychiatry, 58(3), 285–294. https://doi.org/10.1111/jcpp.12658

- Indredavik, B., et al. (2008). Benefits of a multidisciplinary stroke unit: a randomized controlled trial. Stroke, 39(4), 1061–1066. https://doi.org/10.1161/01.STR.0000309988.13180.5f

- American Speech-Language-Hearing Association. (2023). Practice Portal: Adult Aphasia. https://www.asha.org/practice-portal/clinical-issues/adult-aphasia/