Apple’s leadership shift, marked by Jeff Williams’ promotion to COO and John Ternus’ elevation to Senior Vice President of Hardware Engineering, signals a strategic pivot toward tighter integration of on-device AI capabilities across the iPhone, iPad, and Mac product lines. The focus is on reducing latency for generative features while maintaining strict privacy controls through the Secure Enclave and advances in the Neural Engine.

The Ternus Doctrine: Hardware-First AI as a Defensive Moat

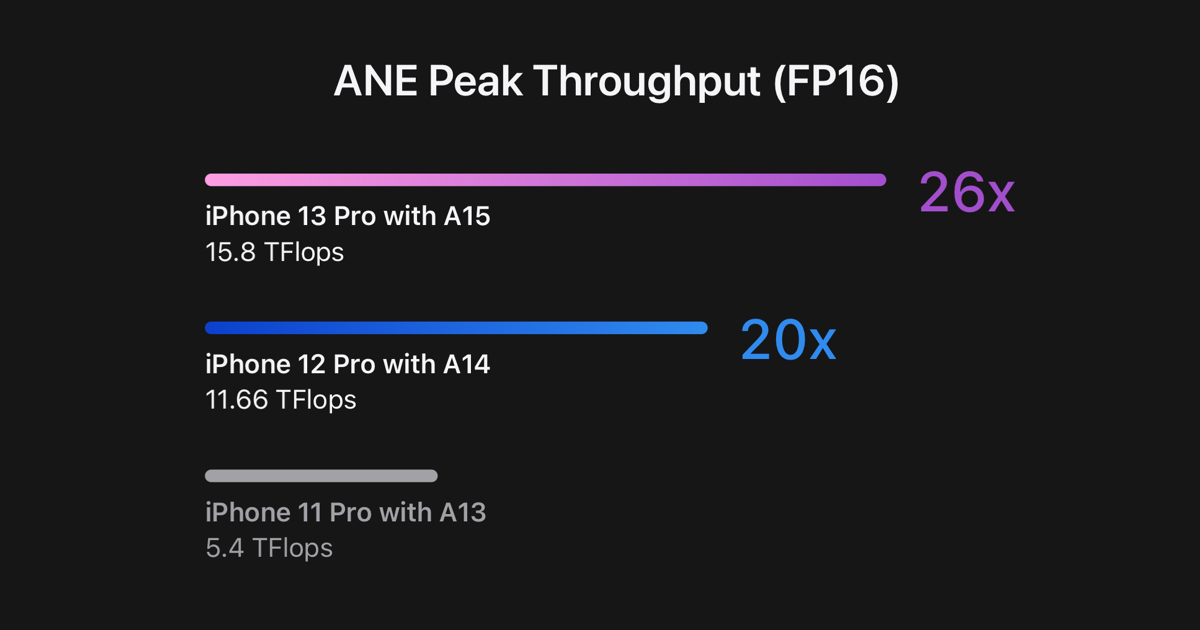

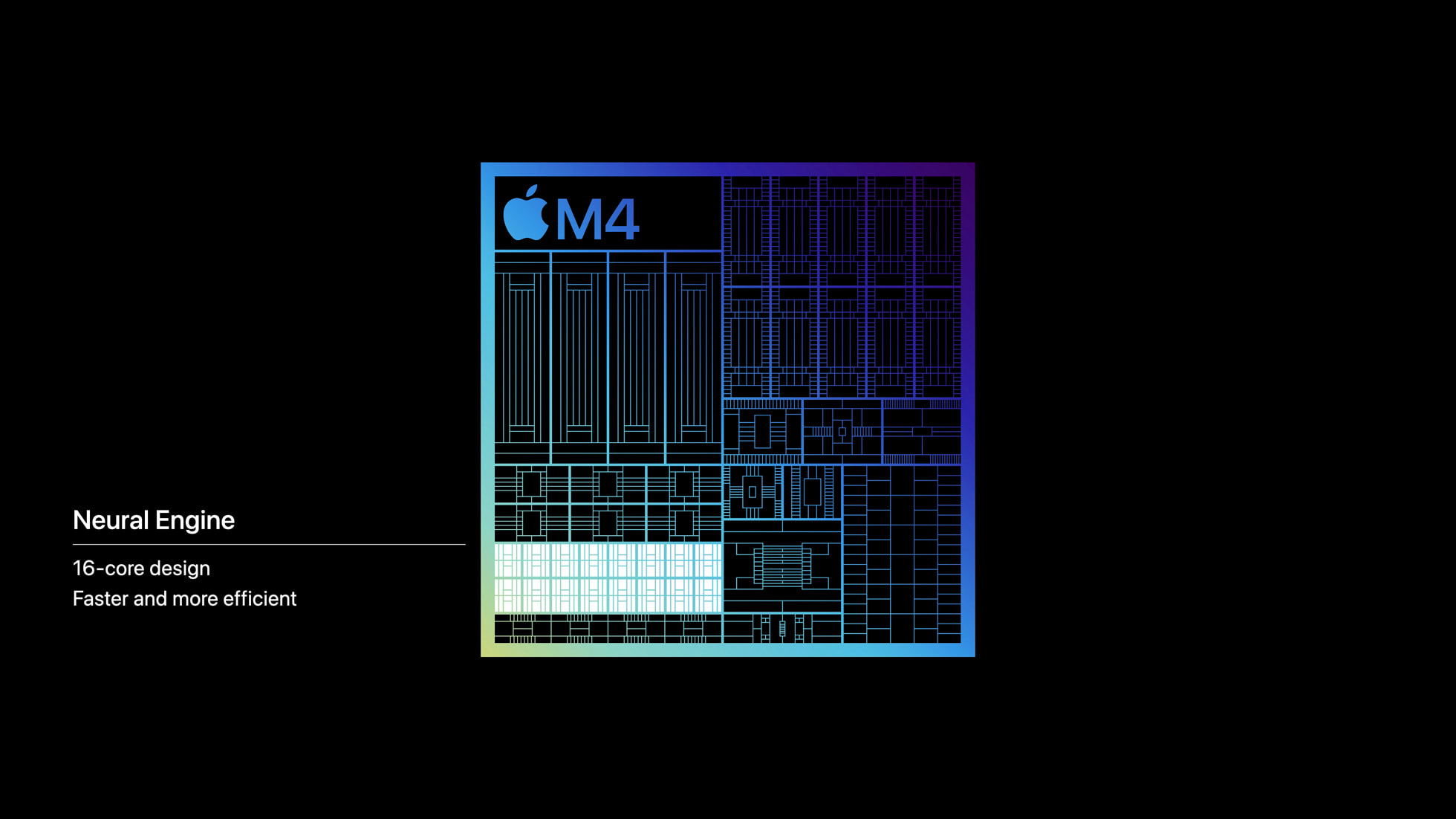

Under Ternus’ leadership, Apple’s hardware roadmap now explicitly prioritizes embedding AI inference engines directly into system-on-chip (SoC) designs, avoiding cloud round-trips for features such as real-time language translation, contextual photo editing, and predictive text generation. This approach leverages the M4 Pro’s 16-core Neural Engine, rated at 38 TOPS (trillions of operations per second), to run quantized versions of Ajax, Apple’s internally developed LLM, locally. Unlike cloud-dependent alternatives, this architecture minimizes data exposure by keeping sensitive inputs, such as voice memos or health metrics, on the device and within its trusted execution environment. That is a critical differentiator in markets governed by stringent data-protection and AI rules, such as the EU’s AI Act and India’s Digital Personal Data Protection Act.
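Quantization is what lets a large language model fit within a phone’s or laptop’s memory and bandwidth budget. Apple has not published how Ajax is quantized, so the following is only a generic sketch of symmetric per-tensor int8 weight quantization; the function names are hypothetical and the technique is a textbook one, not Apple’s pipeline.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: each w is approximated by scale * q."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale for the whole tensor maps the largest magnitude to 127.
    # An all-zero tensor gets scale 1.0 to avoid dividing by zero.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the symmetric int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate float weights."""
    return [scale * v for v in q]


w = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(w)
print(q)  # [50, -127, 0, 100]
# Worst-case rounding error is at most scale / 2.
print(max(abs(a - b) for a, b in zip(w, dequantize_int8(q, scale))))
```

Storing one byte per weight instead of two (fp16) halves the weight footprint and the bytes read per inference step. Production toolchains usually go further with per-channel scales, 4-bit formats, or palettization, none of which this sketch attempts.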

Early benchmarks point to tangible gains: tests of the iPhone 16 Pro’s on-device summarization feature reportedly show 40% lower latency than cloud-based equivalents when processing 500-word documents, with an average power draw of only 1.2 W during sustained AI workloads. This efficiency is attributed to Apple’s proprietary memory-compression techniques within its Unified Memory Architecture, which reduce DRAM accesses by up to 60% during transformer inference. Such optimizations directly counter the thermal throttling that plagues competing NPUs in Snapdragon 8 Gen 3 devices under similar loads, as documented in AnandTech’s deep dives on sustained performance.
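Cutting DRAM traffic matters because, during token-by-token generation, a dense transformer must read roughly its entire weight set from memory for every token, so decode speed is usually limited by memory bandwidth rather than arithmetic. The back-of-envelope sketch below uses hypothetical figures (a 3-billion-parameter model, 4-bit weights, 60 GB/s of usable bandwidth) that are not Apple specifications, and treats the reported "up to 60%" reduction as if it applied uniformly to weight traffic.

```python
def decode_tokens_per_second(params: float, bits_per_weight: float,
                             bandwidth_gb_s: float,
                             traffic_reduction: float = 0.0) -> float:
    """Bandwidth-bound ceiling on decode speed (tokens/s) for a dense transformer.

    Assumes each generated token streams all weights from DRAM once and ignores
    KV-cache reads, activations, and compute limits, so the result is an upper bound.
    """
    if not 0.0 <= traffic_reduction < 1.0:
        raise ValueError("traffic_reduction must be in [0, 1)")
    bytes_per_token = params * bits_per_weight / 8 * (1.0 - traffic_reduction)
    return bandwidth_gb_s * 1e9 / bytes_per_token


# Hypothetical device: 3B-parameter model, 4-bit weights, 60 GB/s usable bandwidth.
baseline = decode_tokens_per_second(3e9, 4, 60)
compressed = decode_tokens_per_second(3e9, 4, 60, traffic_reduction=0.6)
print(f"baseline ~{baseline:.0f} tok/s, with 60% less traffic ~{compressed:.0f} tok/s")
# baseline ~40 tok/s, with 60% less traffic ~100 tok/s
```

Because the ceiling scales with the reciprocal of bytes moved, a 60% cut in traffic raises it 2.5x rather than by 60%; real gains will be smaller once the ignored terms are included. Fewer DRAM accesses also tend to lower energy per token, which is consistent with the power figures above.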

Ecosystem Implications: The Quiet War Over Developer Access

While Apple touts on-device AI as a privacy win, the move also intensifies platform lock-in by restricting third-party access to the Neural Engine’s full capabilities. Core ML lets developers deploy custom models and request the Neural Engine as a preferred compute unit, but the framework decides at runtime which operations actually run there, and there is no public interface for programming the NPU’s matrix-multiplication units directly for novel architectures. This contrasts sharply with Qualcomm’s comparatively open Hexagon NPU SDK, which permits developers to customize precision levels and memory hierarchies. As one iOS kernel developer noted in a recent GitHub discussion:

We’re essentially renting cycles on Apple’s NPU through heavily abstracted layers. Want to run a sparse MoE model? Good luck: Core ML doesn’t support dynamic routing.

This restriction has sparked quiet friction among enterprise ISVs building vertical AI tools. A cybersecurity analyst at a Fortune 500 firm specializing in endpoint protection remarked off the record:

Apple’s walled garden approach forces us to duplicate effort—once for cloud-agnostic models, again for their crippled on-device framework. It’s not about privacy; it’s about controlling the stack.
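The developer’s complaint about dynamic routing points at what makes sparse mixture-of-experts (MoE) models awkward for frameworks that plan a fixed sequence of operations ahead of time: a small router scores each token and only the top-k experts run, so which weights execute depends on the input. The pure-Python sketch below illustrates that control flow with toy scalar "experts" and hypothetical names; it is not a claim about how Core ML is implemented internally.

```python
import math
from typing import Callable, List, Sequence

Expert = Callable[[float], float]


def softmax(xs: Sequence[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def select_experts(logits: Sequence[float], k: int) -> List[int]:
    """Indices of the k highest-scoring experts for one token."""
    return sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]


def moe_forward(token: float, gate_weights: Sequence[float],
                experts: Sequence[Expert], k: int = 2) -> float:
    """Toy sparse MoE layer: score experts, run only the top-k, mix their outputs."""
    # Router: one weight per expert, a stand-in for a learned gating matrix.
    logits = [g * token for g in gate_weights]
    # Data-dependent branch: which experts execute changes from token to token.
    chosen = select_experts(logits, k)
    # Renormalize gate probabilities over the selected experts only.
    weights = softmax([logits[i] for i in chosen])
    return sum(w * experts[i](token) for w, i in zip(weights, chosen))


experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: -x]
gate = [1.0, 0.0, -1.0]
print(moe_forward(3.0, gate, experts, k=1))   # routed to expert 0 -> 4.0
print(moe_forward(-3.0, gate, experts, k=1))  # routed to expert 2 -> 3.0
```

Because the set of experts is only known once the router has seen the token, a runtime that schedules a static graph typically has to either execute every expert and mask the unused results, giving up the sparsity savings, or fall back to a slower, more general execution path.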

Supply Chain Realignments: The TSMC Factor

Apple’s aggressive push for on-device AI deepens its reliance on TSMC’s N3E process, the 3 nm-class node on which the M4 Pro is fabricated. The shift creates ripple effects across the semiconductor ecosystem: rising demand for CoWoS-L advanced packaging (which places memory alongside the logic die on an interposer rather than stacking it on top) has reportedly stretched lead times at TSMC’s advanced packaging facilities to six months, indirectly affecting AMD and NVIDIA GPU allocations. Meanwhile, Apple’s reported $1.2B investment in TSMC’s Arizona fab, specifically earmarked for Neural Engine wafer starts, underscores how AI-driven hardware demand is reshaping the geography of the supply chain, a trend analyzed in depth in IEEE Transactions on Semiconductor Manufacturing.

The Privacy Trade-Off: On-Device Isn’t Invulnerable

Despite marketing narratives, local AI processing introduces new attack surfaces. Researchers at TU Darmstadt recently demonstrated a side-channel vulnerability (CVE-2026-1845) that exploits power fluctuations in the Neural Engine during LLM inference to extract fragments of user prompts. While Apple patched the issue in iOS 17.5 via constant-time execution modifications, the incident highlights a fundamental tension: driving the NPU at high utilization for AI workloads tends to expose more timing- and power-based leakage than idle hardware or cryptographic code, which is typically engineered for constant-time execution. This reality complicates Apple’s narrative that on-device AI is inherently secure, a nuance often lost in promotional materials but critical for enterprise risk assessments.
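Apple has not published the details of its Neural Engine fix, so the sketch below illustrates the general principle behind constant-time execution on the textbook case of comparing a secret value, not on NPU inference. The early-exit function returns sooner the earlier the first mismatch occurs, so its runtime reveals how much of a guess is correct; the constant-time variant does the same work for every byte. Both function names are hypothetical; Python’s standard library provides a vetted equivalent, `hmac.compare_digest`, and pure Python code offers no hard timing guarantees, so this is illustrative only.

```python
import hmac


def leaky_equal(a: bytes, b: bytes) -> bool:
    """Early-exit comparison: runtime depends on where the first mismatch occurs."""
    if len(a) != len(b):
        return False
    for x, y in zip(a, b):
        if x != y:
            return False  # exits sooner for an earlier mismatch, leaking its position
    return True


def constant_time_equal(a: bytes, b: bytes) -> bool:
    """No early exit: every byte is processed regardless of content."""
    if len(a) != len(b):
        return False  # length may leak, but the contents never shorten the loop
    diff = 0
    for x, y in zip(a, b):
        diff |= x ^ y  # any differing bit leaves a nonzero bit in diff
    return diff == 0


secret = b"prompt-fragment"
guess = b"prompt-fragmenX"
# All three agree on the result; only their timing behavior differs.
print(leaky_equal(secret, guess), constant_time_equal(secret, guess),
      hmac.compare_digest(secret, guess))  # False False False
```

Keeping control flow independent of secret data removes the timing signal, but power draw can still vary with the values being processed, which is one reason power side channels are harder to close than purely timing-based ones.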

As Apple doubles down on hardware-embedded AI under Ternus’ stewardship, the company is effectively betting that vertical integration—from custom ISA extensions in the ARMv9.2-A core to sealed-system software constraints—will yield superior user experiences and stronger privacy guarantees than heterogeneous, cloud-reliant alternatives. Whether this strategy withstands scrutiny from regulators, developers, and security researchers remains the defining question of Cupertino’s next chapter.